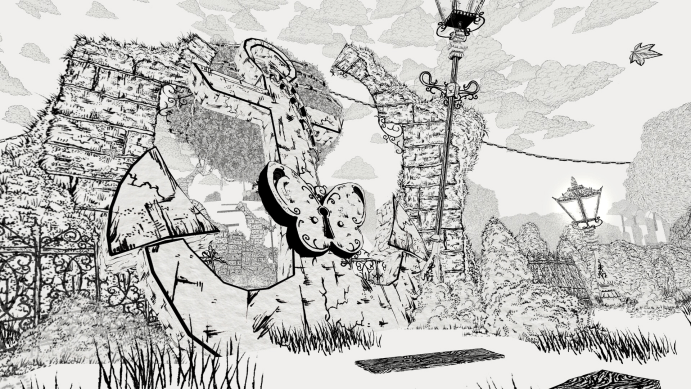

When I slid the cocktail named “Hazy Consensus” across the bar, it was exactly the “gray-blue between lies and compromise” the customer had described. The liquid swayed under the neon light, and a never-melting ice cube made of synthetic spice floated on top. The customer drained the glass. Over the next 30 seconds his pupils widened slightly and the wariness in his voice receded like a tide: he began to tell me that his company, the mind-technology giant “Extraordinary Network”, was secretly carrying out a plan to eliminate the “negative emotions” of all mankind. And I, the bartender Donovan, kept wiping a glass while a line hidden in the retro radio relayed the conversation in real time to my hacker friend upstairs, who was shaping memories out of clay. _The Red Strings Club_ taught me that in this cyberpunk world, the most dangerous weapon is not an electromagnetic gun, but a perfectly mixed drink, a carefully steered conversation, and a lump of wet clay that carries other people’s lives.

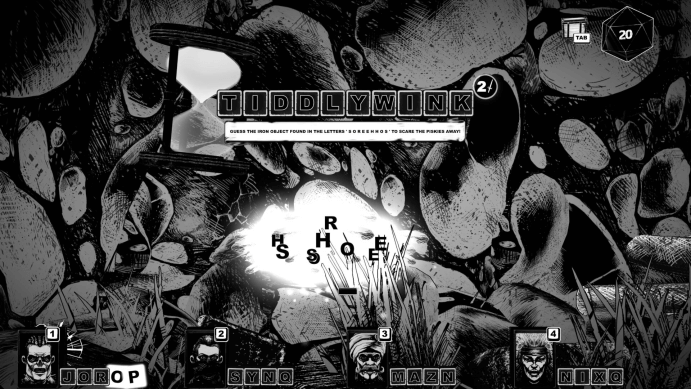

The game switches me between three interrelated but entirely different characters: Donovan, who pries open customers’ hearts with his mixology in the underground bar called the Red Strings Club; Akara, a top hacker who has lost his legs and implants or deletes memories for others by remotely controlling a pottery wheel; and Brand, a genetic engineer who works for “Extraordinary Network” and gradually grows suspicious of his own creations. My tools are not firearms or code, but dialogue trees, drink recipes and clay shapes. Every interaction points directly at the game’s core question: do we have the right to design, edit or even delete human emotions and memories for the sake of “greater happiness”?

Bartending is the first form of “invasion”. Every customer who comes to the bar brings an emotional need: anxiety, loneliness, arrogance or remorse. My job is to work out its essence through dialogue, and then mix a drink that can “catalyze” the customer’s true intentions from a limited set of base spirits (each corresponding to a basic emotion such as love, fear or trust) and additives. This is not a simple matching puzzle, but a subtle exercise in psychological profiling. A mid-level company executive who claims to want “courage” may, after a drink that strengthens “decisiveness”, confess his fear of, and obedience to, systemic injustice. The effect of each drink permanently changes the dialogue options and narrative branches that follow. I’m not just serving drinks; I’m using chemistry and rhetoric to carefully sculpt an upcoming “confession”.

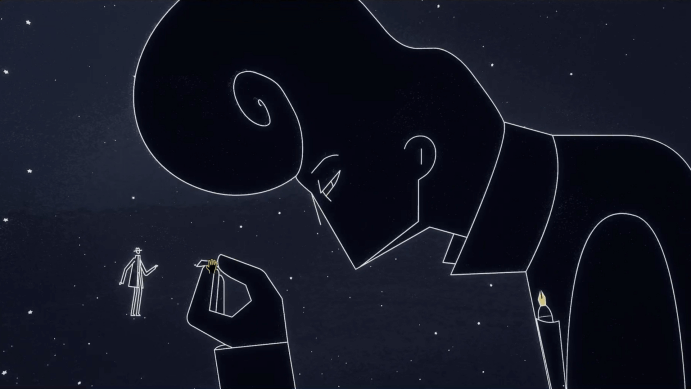

When I switch to Akara’s pottery studio, this kind of “sculpting” becomes more literal. I receive the target’s emotional data stream through an intrusive neural interface, and it is presented on screen as abstract colors and waveforms. My task is to shape the clay into an object that symbolizes the character’s core memory or emotional entanglement: for a conflicted engineer, the first circuit board he repaired as a child; for a guilt-ridden security guard, the stray cat he failed to save. The process of shaping is a process of understanding and intervention: the more accurate and emotionally resonant the shape, the more likely it is to unlock key information or change the character’s behavior in later scenes. Clay is no longer an artistic material here, but a medium of empathy, a tangible hand that touches the scars on other people’s souls.

The game’s sharpest criticism is aimed at Extraordinary Network’s “social and psychological optimization” plan. The plan aims to eliminate human aggression, jealousy and depression through genetic adjustment and neural intervention, and to create a “harmonious” society. Brand’s storyline let me experience first-hand both the temptation and the horror of this kind of “benevolent tyranny”. As an engineer, I have to select “optimized” traits for gene samples, and every choice comes with enticing improvements in the data and a slow numbing of my moral sense. When I finally faced the “perfect”, emotionally neutered prototype I had designed myself, its inhuman calm was more chilling than any ferocious monster.

_The Red Strings Club_ didn’t hand me a simple plan to save the world. Its multiple endings depend on the countless subtle choices I made in bartending, pottery and dialogue: should I expose the truth and cause chaos, or acquiesce to optimization in exchange for superficial peace? Should I use information to manipulate others and achieve my goals, or stick to an almost naive respect for individual autonomy? Long after finishing the game, I still couldn’t forget a drink recipe I had devised myself, called “Kiss of Oblivion”, which makes the drinker forget a painful memory forever. Is that kindness, or a deeper kind of deprivation?

At the end of the game, the neon lights were still flickering in the rain, and lazy jazz was still drifting through the Red Strings Club. But what it left me with was a series of stinging questions about emotional authenticity, memory ownership and the ethics of technology. It made me realize that in an era when technology can reach the very edge of the soul, the biggest moral dilemma may not be how to fight obvious evil, but how to stay wary of the gentle yet thorough emotional colonization that comes wrapped in “it’s for your own good”. After all, when even happiness can be standardized, what we lose may be the very “flavor” that makes life worth living: sour, sweet, bitter and spicy, chaotic and real.